Paramus INTENT

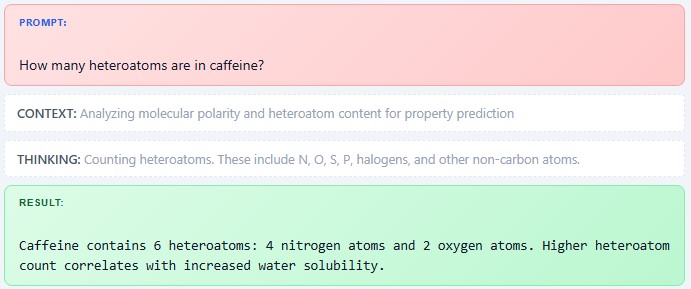

Large language models are excellent at explaining science, but they do not do science on their own. Without real calculations and models, their answers remain plausible text, not validated results.

Trust Comes from Models, Not Language

LLMs Become Scientific When Grounded in Computation

A model and calculation base transforms an LLM from a chatbot into a scientific assistant. It ensures that every answer is grounded in real chemistry, physics, and data rather than linguistic patterns alone.

Scientific value emerges when LLMs are connected to computational models that produce numbers, structures, and predictions. Calculations turn suggestions into measurable outcomes that can be checked and trusted.

FAQ

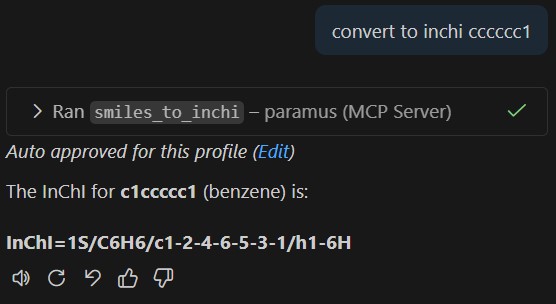

Paramus Intent is an AI copilot that connects large language models to real computational chemistry tools. It routes chemistry questions to the right engine (BRAIN for calculations, WORLD for data, OPERATE for lab work) and returns validated results instead of generated text.

LLMs are excellent at explaining science but do not perform real calculations. Intent connects LLMs to validated HPC engines so answers contain actual computed energies, optimized geometries, and experimental data rather than plausible approximations.

Intent works with any LLM that supports the Model Context Protocol, including Claude (Anthropic), GPT-4 (OpenAI, via compatible clients), and local models. Intent acts as the bridge layer between the LLM and the Paramus computation engines.

Intent analyzes the user request and selects the appropriate backend: quantum chemistry engines for energy calculations, molecular dynamics for simulations, AI models for property predictions, the knowledge graph for data lookups, and laboratory controllers for physical experiments.
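The routing idea can be illustrated with a minimal sketch. This is not Intent's actual request analysis, which is not described here. The keyword table, the `route` function, and the fallback of `None` are all assumptions made for illustration; only the engine names BRAIN, WORLD, and OPERATE come from the description above.

```python
import re

# Hypothetical keyword table (an assumption for illustration only).
# Engine names follow the text: BRAIN = calculations, WORLD = data, OPERATE = lab work.
ROUTES = {
    "OPERATE": {"dispense", "titrate", "synthesize", "pipette"},
    "WORLD": {"lookup", "literature", "measured", "database"},
    "BRAIN": {"energy", "optimize", "geometry", "simulate", "predict"},
}


def route(request):
    """Return the name of the first engine whose keywords appear in the request."""
    # Match whole words so that short keywords never match inside longer words.
    words = set(re.findall(r"[a-z]+", request.lower()))
    for engine, keywords in ROUTES.items():
        if words & keywords:
            return engine
    return None  # no engine matched; a real system would need a fallback policy


if __name__ == "__main__":
    for question in (
        "Optimize the geometry of caffeine",
        "What is the measured boiling point of ethanol?",
        "Dispense 5 mL of buffer",
    ):
        print(question, "->", route(question))
```

A real router would likely use the LLM itself or a trained classifier rather than keyword matching. The point of the sketch is only that every request resolves to exactly one named backend before any answer is produced.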

Intent supports chained workflows in which the output of one calculation feeds into the next. For example, it can optimize a geometry with xTB, run a high-accuracy single-point energy calculation with ORCA, and store the results in the knowledge graph.
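A chained workflow of this kind can be sketched as a list of steps, each taking the previous step's output as its input. The function names below are hypothetical placeholders. They do not call xTB, ORCA, or any Paramus API, and they return labeled strings rather than computed values.

```python
def optimize_geometry(state):
    """Placeholder for a geometry optimization step (e.g. xTB). Computes nothing."""
    return {**state, "geometry": f"optimized({state['molecule']})"}


def single_point_energy(state):
    """Placeholder for a single-point energy step (e.g. ORCA). Computes nothing."""
    return {**state, "energy": f"energy_of({state['geometry']})"}


def store_result(state):
    """Placeholder for writing the results to a knowledge graph."""
    return {**state, "stored": True}


def run_chain(state, steps):
    """Run the steps in order, passing each step's output to the next step."""
    for step in steps:
        state = step(state)
    return state


if __name__ == "__main__":
    result = run_chain(
        {"molecule": "water"},
        [optimize_geometry, single_point_energy, store_result],
    )
    print(result)
```

Because each step reads only what earlier steps wrote, reordering the list so that the energy step runs before the optimization fails with a `KeyError`, which makes the data dependency between stages explicit.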